What is this guide?

This guide is for anyone who wants to set up Rancher on a K3s cluster that runs on a network the public internet can't reach. In my case, that means running the cluster on a separate subnet of my home network. I kept this cluster internal-only because I don't currently run anything I'd need to access from outside my home. If you're comfortable opening holes in your firewall, enabling port forwarding, and so on to make your cluster reachable from the outside world, this guide may not be for you.

Here is a quick guide to setting up Rancher on the same cluster as your K3s installation.

Before we begin, I want to give a quick shout-out to the guides from NetworkChuck and GitHub user kopwei that I used as a starting point.

Setting Up K3s

1 - Set up your SD Cards

- Flash Raspberry Pi OS Lite onto each SD card using the Raspberry Pi Imager

2 - Init your PiOS

- Put the SD cards in your RPis

- Turn them on

- Wait ~5-10 minutes for the first boot to finish

- Turn them off

- Take out the SD cards

3 - Enable the needed settings

For each initialized SD card, insert it into your card reader and edit the following files on the boot partition.

Replace the placeholders in angle brackets with your own values.

cmdline.txt

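Append the following to the end of the existing line; cmdline.txt must stay on a single line. The third field of ip= is your gateway (on most home networks, your router), and the subnet mask is usually 255.255.255.0.

cgroup_memory=1 cgroup_enable=memory ip=<static-ip>::<gateway-ip>:<subnet-mask>:<hostname>:eth0:off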
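If you'd rather script this across several cards, here is a minimal bash sketch. It assumes the boot partition is mounted somewhere you can pass in (the BOOT_DIR path in the comment is hypothetical) and that cmdline.txt holds its usual single line; append_cmdline_args is a helper name made up for this example.

```shell
# append_cmdline_args FILE ARG...  (hypothetical helper)
# Adds each ARG to the end of the single line in FILE unless an identical
# ARG is already present, so running it twice is harmless.
append_cmdline_args() {
  local file="$1"
  shift
  local line arg
  line="$(head -n 1 "$file")"
  for arg in "$@"; do
    # Pad with spaces so an ARG only matches a whole token, not part of one.
    case " $line " in
      *" $arg "*) ;;              # already present: skip it
      *) line="$line $arg" ;;
    esac
  done
  # Rewrite the file as exactly one line.
  printf '%s\n' "$line" > "$file"
}

# Example (BOOT_DIR is a hypothetical mount point for the boot partition):
#   BOOT_DIR=/media/$USER/boot
#   append_cmdline_args "$BOOT_DIR/cmdline.txt" cgroup_memory=1 cgroup_enable=memory \
#     "ip=<static-ip>::<gateway-ip>:<subnet-mask>:<hostname>:eth0:off"
```

Because the helper reads the whole line into a variable before writing, it never produces a second line in the file.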

config.txt

In the [all] section of this file, add the arm_64bit flag so the Pi boots a 64-bit kernel. It should look like this:

[all]

arm_64bit=1
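A similar sketch for config.txt, assuming the same mounted boot partition. Rather than editing an existing [all] section in place, it appends a new [all] section at the end of the file; [all] resets any earlier conditional filter such as [pi4], so the line applies to every board. ensure_arm64 is a hypothetical helper name.

```shell
# ensure_arm64 FILE  (hypothetical helper)
# Appends a fresh [all] section containing arm_64bit=1 unless that exact
# line already exists somewhere in FILE.
# Limitation: if the line already exists under a conditional section,
# this leaves it alone; check by hand in that case.
ensure_arm64() {
  local file="$1"
  if grep -qx 'arm_64bit=1' "$file"; then
    return 0
  fi
  # The leading newline guards against a file without a trailing newline.
  printf '\n[all]\narm_64bit=1\n' >> "$file"
}

# Example (hypothetical mount point):
#   ensure_arm64 /media/$USER/boot/config.txt
```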

4 - Put the SD cards back into your RPis and boot them up

I've had the hostname not change despite setting it with the line in cmdline.txt. If that happens, you can change it with sudo raspi-config.

Enable Legacy iptables

The latest version of Raspberry Pi OS doesn't include the iptables package, which provides iptables-legacy. You can install it by running

sudo apt install iptables

Once it's installed, flush the existing rules, switch both iptables and ip6tables to the legacy backend, and reboot:

sudo iptables -F;

sudo update-alternatives --set iptables /usr/sbin/iptables-legacy;

sudo update-alternatives --set ip6tables /usr/sbin/ip6tables-legacy;

sudo reboot
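After the reboot you can confirm the switch took effect. On iptables 1.8.x, iptables --version reports its backend in parentheses, either (legacy) or (nf_tables). The helper below (a made-up name) just pulls that word out so it's easy to check on each node; update-alternatives --display iptables shows the same choice from the alternatives side.

```shell
# iptables_mode VERSION_STRING  (hypothetical helper)
# Prints the backend named in `iptables --version` output:
# "legacy", "nf_tables", or "unknown" if neither is present.
iptables_mode() {
  case "$1" in
    *"(legacy)"*)    echo legacy ;;
    *"(nf_tables)"*) echo nf_tables ;;
    *)               echo unknown ;;
  esac
}

# On each Pi after the reboot (should print "legacy"):
#   iptables_mode "$(sudo iptables --version)"
```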

Install K3s

Switch to a root shell:

sudo su -

Run this on your master node:

curl -sfL https://get.k3s.io | K3S_KUBECONFIG_MODE="644" sh -s

Get your node token by following the instructions in the output of the master node install step. On the master node, K3s stores it in /var/lib/rancher/k3s/server/node-token.

Run this on each worker node to join it to the cluster:

curl -sfL https://get.k3s.io | K3S_TOKEN="<your-token>" K3S_URL="https://<your-master-node-ip>:6443" K3S_NODE_NAME="<node-name>" sh -

On the master node, run kubectl get nodes to check your nodes' status and watch the workers join.
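kubectl get nodes prints a STATUS column, so a quick way to see how many nodes have joined and become Ready is to count them. This is a small sketch with a hypothetical helper name; it assumes the default column order (NAME, STATUS, ROLES, AGE, VERSION) when run with --no-headers.

```shell
# count_ready_nodes  (hypothetical helper)
# Reads `kubectl get nodes --no-headers` output on stdin and prints how many
# nodes have a STATUS starting with "Ready". Cordoned nodes show
# "Ready,SchedulingDisabled" and are counted too; "NotReady" is not.
count_ready_nodes() {
  awk '$2 ~ /^Ready/ { n++ } END { print n + 0 }'
}

# Usage on the master node:
#   kubectl get nodes --no-headers | count_ready_nodes
```

If you'd rather block until everything is up, kubectl wait --for=condition=Ready nodes --all --timeout=300s does that directly.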

W00t! You should now have K3s installed and running.

First off, I recommend copying your kubeconfig to ~/.kube/config on your local machine so you can run helm and kubectl commands from there. Copy /etc/rancher/k3s/k3s.yaml from the master node and change the server key to the IP or address your local machine uses to reach the master node. It's probably the same address you use to SSH into it.

Here's an example:

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: <cert-data>
    server: https://127.0.0.1:6443  # <-- EDIT THIS
  name: default
contexts:
- context:
    cluster: default
    user: default
  name: default
current-context: default
kind: Config
preferences: {}
users:
- name: default
  user:
    client-certificate-data: <data>
    client-key-data: <data>
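A sketch of the copy-and-edit step, run from your local machine. It assumes a pi SSH user and that the file still contains the default https://127.0.0.1:6443 server line; point_kubeconfig_at is a hypothetical helper. Copying with scp as a normal user should work because K3S_KUBECONFIG_MODE="644" made k3s.yaml world-readable. Note that this overwrites any existing ~/.kube/config, so merge by hand if you already manage other clusters.

```shell
# point_kubeconfig_at FILE HOST  (hypothetical helper)
# Replaces the k3s default server address https://127.0.0.1:6443 with
# https://HOST:6443. Writes through a temp file instead of `sed -i`, whose
# syntax differs between GNU sed and BSD/macOS sed.
point_kubeconfig_at() {
  local file="$1" host="$2" tmp
  tmp="$(mktemp)"
  sed "s#server: https://127\.0\.0\.1:6443#server: https://${host}:6443#" \
    "$file" > "$tmp" && mv "$tmp" "$file"
}

# Usage from your local machine (this overwrites any existing ~/.kube/config):
#   mkdir -p ~/.kube
#   scp pi@<your-master-node-ip>:/etc/rancher/k3s/k3s.yaml ~/.kube/config
#   point_kubeconfig_at ~/.kube/config <your-master-node-ip>
#   kubectl get nodes
```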

Install Cert-Manager

Follow the cert-manager installation docs for in-depth instructions. I'm just going to share what I did.

Add helm repo

helm repo add jetstack https://charts.jetstack.io

helm repo update

Install

helm install cert-manager jetstack/cert-manager \
  --namespace cert-manager \
  --create-namespace \
  --version v1.6.1 \
  --set installCRDs=true

Run kubectl get pods -n cert-manager and verify the pods are running before moving on.
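kubectl get pods shows both a READY column (ready/total containers) and a STATUS column, and both matter. The sketch below (hypothetical helper name) treats the output as healthy only when every pod is Running with all containers ready, skipping pods in Completed state (for example a finished one-off Job pod, if your chart version creates one). It assumes the default column order with --no-headers.

```shell
# all_pods_ready  (hypothetical helper)
# Reads `kubectl get pods --no-headers` output on stdin (columns: NAME READY
# STATUS RESTARTS AGE). Exits 0 only if at least one pod is counted and every
# counted pod is Running with all containers ready ("n/n").
all_pods_ready() {
  awk '
    $3 == "Completed" { next }          # finished Job pods are expected
    {
      total++
      split($2, r, "/")                 # READY column, e.g. "1/1"
      if ($3 != "Running" || r[1] != r[2]) bad++
    }
    END { if (total > 0 && bad == 0) exit 0; exit 1 }
  '
}

# Usage:
#   kubectl get pods -n cert-manager --no-headers | all_pods_ready && echo "cert-manager is up"
```

To block on a specific component instead, kubectl -n cert-manager rollout status deploy/cert-manager-webhook waits for the webhook Deployment.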

Install Rancher

Again, you can follow the instructions on the Rancher website if you want more in-depth discussion on what is going on. I'm just going to share what I did.

Add helm repo

helm repo add rancher-stable https://releases.rancher.com/server-charts/stable

helm repo update

Create namespace

kubectl create ns cattle-system

Install

helm install rancher rancher-stable/rancher \
  --namespace cattle-system \
  --set hostname=<your-hostname> \
  --set bootstrapPassword=admin \
  --set ingress.tls.source=letsEncrypt \
  --set letsEncrypt.email=<your-email> \
  --set rancherImageTag=v2.6.2-linux-arm64
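Before touching certificates, it's worth waiting for the Rancher Deployment itself. A minimal sketch, assuming the chart names the Deployment rancher (the release name used above) in cattle-system; wait_for_rancher is a hypothetical wrapper around kubectl rollout status.

```shell
# wait_for_rancher [TIMEOUT]  (hypothetical helper)
# Blocks until the rancher Deployment in cattle-system has rolled out, or
# fails after TIMEOUT (default 10m).
wait_for_rancher() {
  kubectl -n cattle-system rollout status deploy/rancher --timeout="${1:-10m}"
}

# Usage:
#   wait_for_rancher 15m
```

The Deployment can finish rolling out while the certificate is still pending; that part is handled separately.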

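From here, this is where you have to start playing with things. The chart sets Let's Encrypt up with the default HTTP01 challenge, which requires Let's Encrypt to reach your cluster over the public internet, so on an internal-only cluster the certificate won't be issued as-is.

If you have kubens, I'd recommend switching your default namespace now. If not, make sure you add the -n cattle-system flag to your kubectl commands for the rest of this post.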

kubens cattle-system

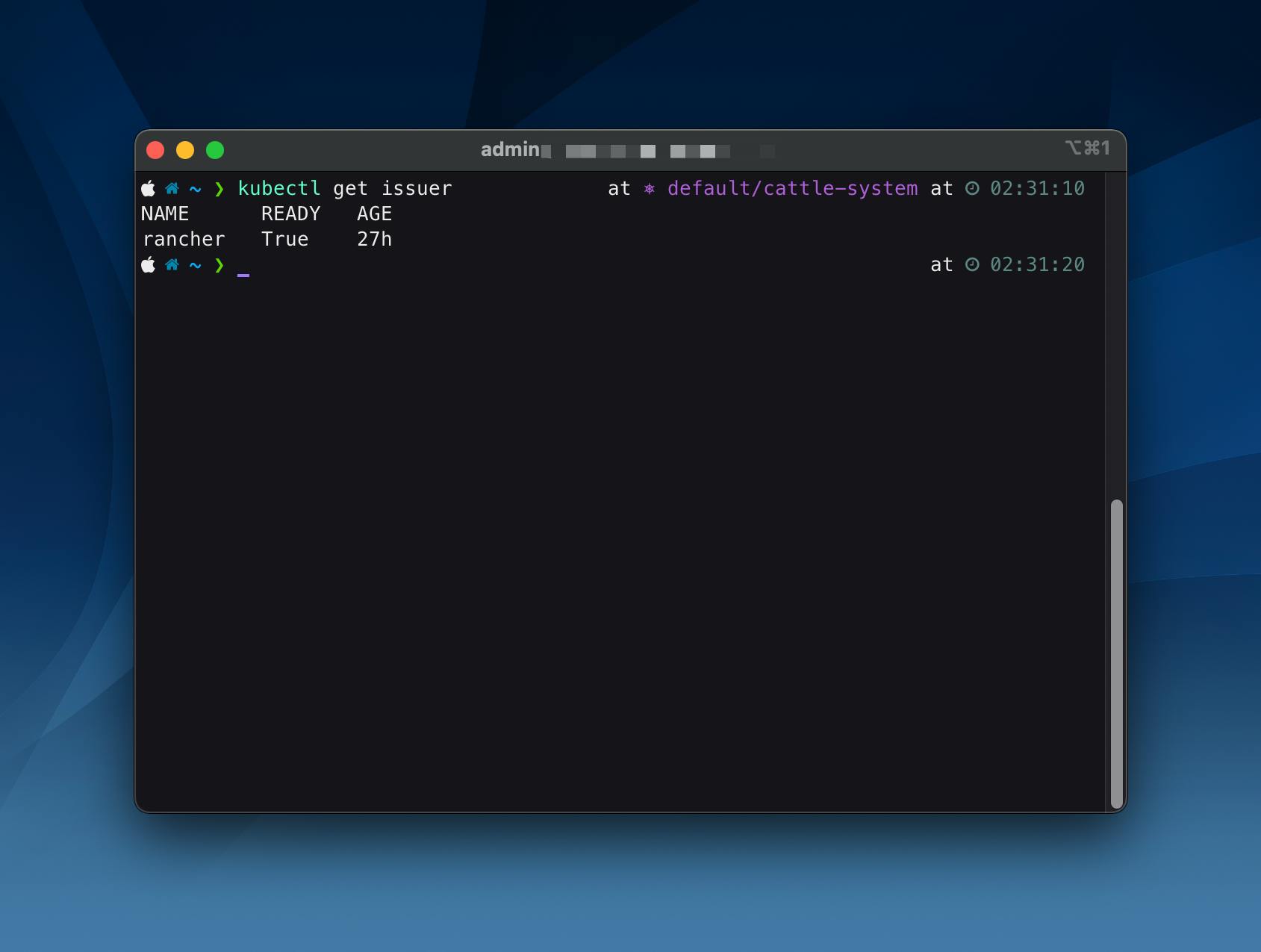

Check for an issuer

kubectl get issuer

You should see an Issuer named rancher, created by the Rancher Helm chart.

You'll want to edit that Issuer to use the DNS01 challenge. Here is an example of what I did using DigitalOcean as my DNS provider.

Create a secret in the cattle-system namespace

Secret file

apiVersion: v1
kind: Secret
metadata:
  name: digitalocean-dns  # Any name works, but the Issuer must reference the same name
  namespace: cattle-system
data:
  access-token: "<base64-encoded DigitalOcean API token>"

Save the above into a file and then create it on the cluster:

kubectl create -f <path/to/secret-file.yaml>
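The data field of a Secret expects base64. Two gotchas when encoding a DigitalOcean API token by hand: echo appends a newline that ends up inside the encoded value, and GNU base64 wraps long output every 76 characters. A small sketch (hypothetical helper name) that avoids both:

```shell
# b64_token TOKEN  (hypothetical helper)
# Base64-encodes TOKEN for a Secret's data field. printf avoids the trailing
# newline that echo would add, and tr removes the line breaks GNU base64
# inserts every 76 characters of output.
b64_token() {
  printf '%s' "$1" | base64 | tr -d '\n'
}

# Usage (paste the output into access-token in the Secret file):
#   b64_token '<your-digitalocean-api-token>'
```

Alternatively, kubectl -n cattle-system create secret generic digitalocean-dns --from-literal=access-token=<your-token> creates the same Secret and does the encoding for you.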

Edit Issuer

I used kubectl edit issuer rancher. Here's what it looked like after my change:

apiVersion: v1
items:
- apiVersion: cert-manager.io/v1
  kind: Issuer
  metadata:
    annotations:
      meta.helm.sh/release-name: rancher
      meta.helm.sh/release-namespace: cattle-system
    creationTimestamp: "2021-12-31T03:56:49Z"
    generation: 2
    labels:
      app: rancher
      app.kubernetes.io/managed-by: Helm
      chart: rancher-2.6.3
      heritage: Helm
      release: rancher
    name: rancher
    namespace: cattle-system
    resourceVersion: "45795"
    uid: <uid>
  spec:
    acme:
      email: [redacted]
      preferredChain: ""
      privateKeySecretRef:
        name: letsencrypt-production
      server: https://acme-v02.api.letsencrypt.org/directory
      solvers:
      - dns01:  # <== REMOVE http01 and replace with dns01 solver
          digitalocean:
            tokenSecretRef:
              key: access-token
              name: digitalocean-dns
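If you prefer a non-interactive change over kubectl edit, a JSON merge patch can swap the solver list in one command. With --type merge, lists are replaced wholesale, so the http01 solver disappears along with the old list. This is a sketch using the names from above (Issuer rancher, Secret digitalocean-dns, key access-token); both function names are hypothetical.

```shell
# dns01_patch SECRET_NAME SECRET_KEY  (hypothetical helper)
# Prints a JSON merge patch that replaces spec.acme.solvers with a single
# DigitalOcean DNS01 solver reading its token from the given Secret key.
dns01_patch() {
  printf '{"spec":{"acme":{"solvers":[{"dns01":{"digitalocean":{"tokenSecretRef":{"name":"%s","key":"%s"}}}}]}}}' "$1" "$2"
}

# patch_rancher_issuer  (hypothetical helper)
# Applies the patch to the rancher Issuer in cattle-system.
patch_rancher_issuer() {
  kubectl -n cattle-system patch issuer rancher --type merge \
    -p "$(dns01_patch digitalocean-dns access-token)"
}
```

A later helm upgrade of Rancher will likely re-render the Issuer and put the HTTP01 solver back, so expect to reapply the change after upgrades.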

From there, you should only need to find the certificate's name with kubectl get certificate and delete it with kubectl delete certificate <name>. Once you delete it, cert-manager will create a new Certificate and CertificateRequest, and you'll be able to reach your Rancher portal at https://<your-hostname>/dashboard/?setup=<bootstrap-password>, using the hostname and bootstrap password you passed to helm install.
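After deleting the certificate, you can wait for its replacement instead of polling. This is a sketch with a hypothetical helper; pass it the name reported by kubectl get certificate. DNS01 validation can take a few minutes while the TXT record propagates, hence the 10-minute default.

```shell
# wait_for_cert NAME [TIMEOUT]  (hypothetical helper)
# Blocks until the Certificate reports condition Ready=True, or fails after
# TIMEOUT (default 10m).
wait_for_cert() {
  kubectl -n cattle-system wait --for=condition=Ready "certificate/$1" \
    --timeout="${2:-10m}"
}

# Usage:
#   wait_for_cert <name>
# If it stalls, the ACME challenge objects usually explain why:
#   kubectl -n cattle-system describe challenges
```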

Conclusion

So, what did we do?

We edited the Rancher certificate Issuer to use an ACME DNS01 solver instead of the default HTTP01 solver. Rancher only runs in HTTPS mode, so we have to prove to Let's Encrypt that we control the domain we claim to control. HTTP01 proves that by having Let's Encrypt fetch a file from the cluster over port 80 on the public internet, which an internal-only cluster can't allow; DNS01 proves it with a TXT record in the domain's public DNS instead. I have DigitalOcean configured to handle the external DNS for my domain, so I was able to give cert-manager an access token that lets it create the TXT records needed to prove I own the domain. You should be able to use any of the providers described in the DNS01 section of the cert-manager ACME docs.
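Under DNS01, the TXT record lives at _acme-challenge. prepended to the name being validated (RFC 8555, section 8.4). While a challenge is pending you can look it up yourself; the sketch below uses a hypothetical helper, and rancher.example.com is only a placeholder hostname. cert-manager cleans the record up after validation, so an empty answer afterwards is expected.

```shell
# acme_challenge_name HOSTNAME  (hypothetical helper)
# Prints the DNS name that holds the DNS01 TXT record for HOSTNAME.
acme_challenge_name() {
  printf '_acme-challenge.%s\n' "$1"
}

# While a challenge is pending:
#   dig +short TXT "$(acme_challenge_name rancher.example.com)"
```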